Multimodal AI is AI that can see, hear, and read, much as humans do. It is a type of artificial intelligence that can understand and process data, then generate new data based on what it has previously collected and processed. Unlike traditional AI models, which were typically limited to a single type of input such as text, Multimodal AI can take in more than one type of data to perform its tasks. By combining multiple forms of data, Multimodal AI gains a richer understanding of the world.

The data can be in a variety of forms such as:

- Images

- Videos

- Audio

- Text

The following is a high-level breakdown of how Multimodal AI works:

- Data Input: Multimodal AI systems take in data from multiple sources. For example, a system may need to process both audio and visual data from a video file.

- Data Processing: The Multimodal AI system uses machine learning algorithms to interpret each type of data. For example, it might use speech recognition and natural language processing to understand spoken words, and computer vision to understand visual data.

- Integration: The Multimodal AI system integrates the information from each type of data to form a more complete understanding of the input. For example, it might combine information about what is being said with information about who is speaking and what they are doing.

- Output: The system generates an output based on its understanding of the input. This could be anything from generating a response to a user’s question, to identifying objects in a video, to predicting future events based on past data.
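The four steps above can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions, not how any production system is built: the `VideoClip` type, the hand-written "encoders", and the rule in `respond` are all hypothetical stand-ins for the trained models a real multimodal system would use.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VideoClip:
    """Hypothetical input holding two modalities taken from one video file."""
    transcript: str                 # stand-in for the audio track after speech recognition
    frame_brightness: List[float]   # stand-in for visual data, one value in [0, 1] per frame


def encode_text(transcript: str) -> List[float]:
    """Data processing (text): toy features, word count and question marks per word."""
    words = transcript.split()
    n = len(words)
    return [float(n), transcript.count("?") / n if n else 0.0]


def encode_frames(frames: List[float]) -> List[float]:
    """Data processing (vision): toy features, mean brightness and brightness range."""
    if not frames:
        return [0.0, 0.0]
    return [sum(frames) / len(frames), max(frames) - min(frames)]


def fuse(text_vec: List[float], image_vec: List[float]) -> List[float]:
    """Integration: concatenate the per-modality features into one vector."""
    return text_vec + image_vec


def respond(fused: List[float]) -> str:
    """Output: a hand-written rule over the fused features (a real system would use a model)."""
    _word_count, question_ratio, mean_brightness, _brightness_range = fused
    speech = "a question" if question_ratio > 0 else "a statement"
    scene = "bright" if mean_brightness >= 0.5 else "dark"
    return f"{speech} spoken in a {scene} scene"


# Data input: one clip carrying both modalities.
clip = VideoClip(transcript="Who is speaking?", frame_brightness=[0.25, 0.5, 0.75])
fused = fuse(encode_text(clip.transcript), encode_frames(clip.frame_brightness))
print(respond(fused))
```

The point of the sketch is the shape of the pipeline: each modality is processed separately, the results are merged into a single representation, and the output is computed from that merged representation rather than from either modality alone.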

Multimodal AI is particularly useful in scenarios where a single mode of data is not enough to perform a task completely. By combining data from multiple sources, Multimodal AI gains a richer understanding of the world, which lets it:

- Handle ambiguity better: Real-world data is often messy and unclear. Multimodal AI can combine information from different sources to make sense of complex situations.

- Boost performance: Combining different data types can lead to more accurate results in tasks like image recognition or sentiment analysis.

- Open doors to new applications: This technology is a game-changer for fields like:

  - Content creation: Imagine AI that generates captions perfectly tailored to an image or even creates videos based on your photos.

  - Enhanced search experiences: Search engines that can understand the context of your queries, including images and videos.

  - Personalized storytelling: AI that writes stories inspired by art or creates poems that capture the emotions in a photograph.
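The earlier points about handling ambiguity and boosting performance can be made concrete with a small example. The sketch below shows one common way to combine modalities, often called late fusion: each modality produces its own class probabilities, and the results are averaged. The probabilities and the equal weights are invented for illustration, and `late_fusion` is a hypothetical helper, not part of any particular library.

```python
from typing import Dict, List


def late_fusion(predictions: List[Dict[str, float]], weights: List[float]) -> Dict[str, float]:
    """Weighted average of per-modality class probabilities."""
    if len(predictions) != len(weights) or not predictions:
        raise ValueError("need one weight per modality and at least one modality")
    total = sum(weights)
    labels = predictions[0].keys()
    return {
        label: sum(w * p[label] for p, w in zip(predictions, weights)) / total
        for label in labels
    }


# Made-up scores for the sentence "Great, just great."
# The words alone are ambiguous; the tone of voice is not.
text_probs = {"positive": 0.55, "negative": 0.45}
audio_probs = {"positive": 0.10, "negative": 0.90}

fused = late_fusion([text_probs, audio_probs], weights=[1.0, 1.0])
best = max(fused, key=fused.get)
print(best, fused)
```

With text alone, the prediction leans slightly positive. Once the audio signal is included, the combined prediction is negative, which is the kind of disambiguation a single modality cannot provide.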

The emergence of multimodal models

The emergence of multimodal models has been accelerated by advances in several key areas:

- Natural Language Processing (NLP): NLP helps AI understand human language. NLP models can now process many languages, including regional languages such as Hindi and Malayalam.

- Image and Video Analysis: AI is now capable of extracting meaningful data from visuals with high accuracy.

- Speech Recognition: AI is getting better at understanding human speech.

The AI model landscape continues to grow at an exponential pace, with new and increasingly capable models being launched frequently. Recent additions include GPT-4 Turbo and GPT-4o.

What does the future hold for Multimodal AI?

The potential of Multimodal AI is huge, and we can expect many transformational applications across industries and domains as the technology matures.

Here is a glimpse of what the future holds:

- Natural Human-Computer Interaction: People will interact with machines in a natural way, using a mix of speech, gestures, and text. Big players like Google have already begun conceptualizing this, for example through Google Classroom.

- Revolutionizing Education: Personalized learning experiences that cater to different learning styles.

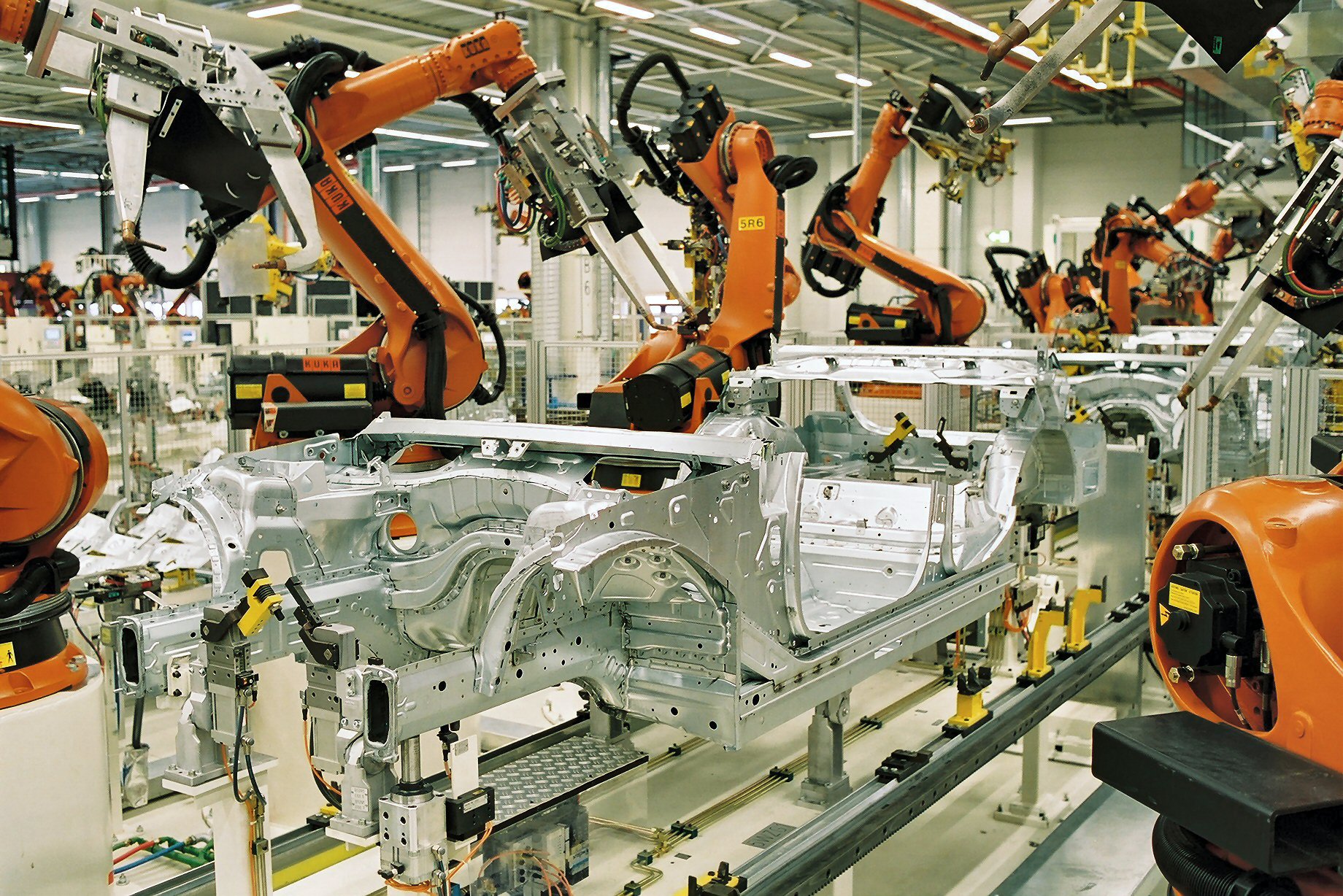

- Advancements in Robotics: Robots that can perceive and respond to their environment in real time.

Overall, the evolution of Multimodal AI is a significant step towards achieving human-level intelligence in machines. It promises to reshape the way we interact with technology and solve some of the world’s most pressing challenges.
