In this blog, I want to share something interesting but also a little scary:

A new threat has surfaced in the constantly changing field of cybersecurity: AI worms. These cyber adversaries cause havoc by taking advantage of flaws in generative AI models, such as OpenAI’s ChatGPT.

AI Worms: What Are They?

For those who don’t know, AI worms are a still largely hypothetical kind of self-replicating malware, designed to compromise and manipulate computer systems by exploiting ChatGPT and other generative AI models. In contrast to typical computer worms, which only replicate and consume resources, AI worms would use artificial intelligence to make decisions, evolve, and possibly cause major harm. It’s vital to think about the threats that could arise and how we, as software engineers, should prepare for them.

The Threat Landscape

Morris II, an AI worm designed specifically to target email clients driven by generative AI, was first revealed by researchers in 2024.

Here is what you should know:

- Infiltration: Morris II penetrates email clients by taking advantage of flaws in AI-powered systems.

- Malicious behavior: Once inside, it can carry out a range of malicious activities, such as data theft, phishing attempts, and the spread of harmful content.

The Way AI Worms Work

An AI worm’s primary functions would probably include the following essential components:

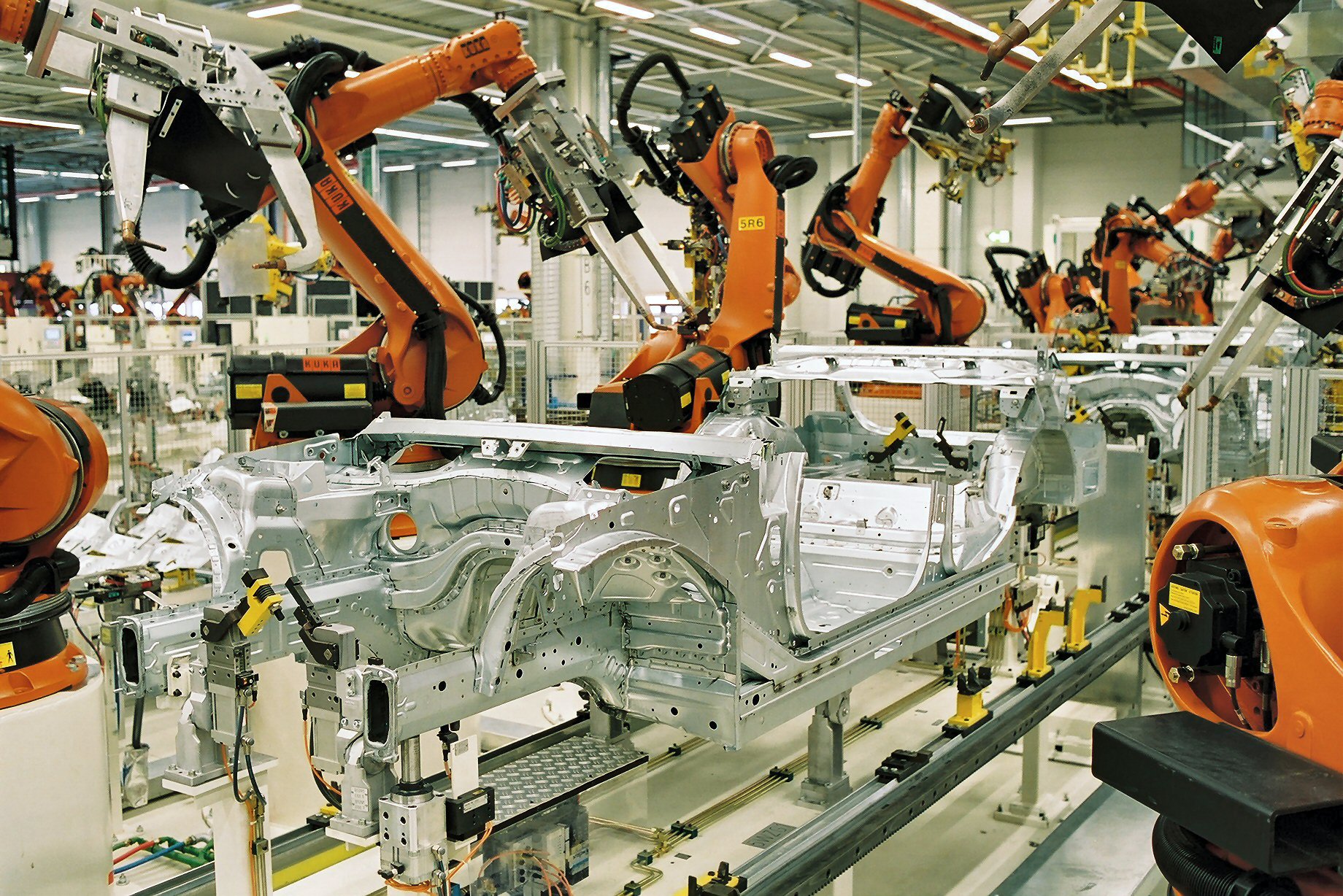

- Self-replication: The capacity to replicate itself, disseminate within a network, and possibly infect additional devices.

- Learning and adaptation: The use of AI algorithms to scan its surroundings, learn from what it encounters, and modify its behavior to meet its objectives.

- Resource manipulation: The capacity to exploit system resources to steal data or disrupt vital functions.

- Social engineering: The use of AI to trick people, for example by sending deceptive emails or creating fake social media profiles.
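
From a defender's point of view, the self-replication property above suggests one simple check. The sketch below assumes, purely for illustration, that a worm spreads by getting an AI email assistant to copy attacker-written text into the replies it drafts. The function names and the 80-character threshold are hypothetical choices, not part of any published tool.

```python
from difflib import SequenceMatcher


def longest_shared_run(incoming: str, outgoing: str) -> int:
    """Return the length of the longest block of text found verbatim in both strings."""
    matcher = SequenceMatcher(None, incoming, outgoing, autojunk=False)
    match = matcher.find_longest_match(0, len(incoming), 0, len(outgoing))
    return match.size


def looks_self_replicating(incoming: str, outgoing: str, min_run: int = 80) -> bool:
    """Flag a drafted reply that copies a long verbatim run from the incoming message.

    min_run is an illustrative threshold (an assumption); it would need tuning
    against real mail before being relied on.
    """
    return longest_shared_run(incoming, outgoing) >= min_run
```

A flagged draft could be held for human review rather than sent automatically. Note that quoted replies legitimately repeat the original message, so a real deployment would probably need to strip quoted sections before running a check like this.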

Essential Safety Measures

It’s never too early to think about precautions. As software developers, we can take the following actions:

- Secure coding techniques: Practices such as input validation and appropriate access control can greatly reduce the attack surface available to malicious software.

- Research on AI safety: It is critical to encourage and participate in studies on the creation of ethical and safe AI. This involves incorporating strong security measures into AI systems.

- Intrusion detection systems (IDS): It’s critical to deploy a reliable IDS that can detect suspicious activity and alert on it or block it.

- Sandboxing: Sandboxing establishes isolated environments in which potential threats can be tested and examined without endangering the primary system.

- Frequent security audits: Penetration testing and regular security audits can assist in finding vulnerabilities before they are exploited.
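
To make the first item in the list above more concrete, here is a minimal sketch of input validation and access control in front of an AI email assistant. Everything in it is an assumption made for illustration: the action names, the size limit, and the `AssistantRequest` type are hypothetical and do not come from any particular product.

```python
import re
from dataclasses import dataclass

# Hypothetical limits and permissions, chosen only for this example.
MAX_BODY_CHARS = 20_000
ALLOWED_ACTIONS = {"summarize", "draft_reply"}  # deliberately no "send" or "forward"

# Control characters other than tab, newline, and carriage return.
CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")


@dataclass(frozen=True)
class AssistantRequest:
    action: str
    email_body: str


def validate_request(action: str, email_body: str) -> AssistantRequest:
    """Reject disallowed actions and oversized input, then strip control characters."""
    if action not in ALLOWED_ACTIONS:
        # Access control: the assistant may only perform allowlisted actions.
        raise PermissionError(f"action not permitted: {action!r}")
    if len(email_body) > MAX_BODY_CHARS:
        # Input validation: refuse unexpectedly large messages.
        raise ValueError("email body exceeds size limit")
    cleaned = CONTROL_CHARS.sub("", email_body)
    return AssistantRequest(action=action, email_body=cleaned)
```

The design choice here is that the assistant can only summarize or draft. Sending stays with a human, so even if malicious text slips past validation, it cannot forward itself to other inboxes without someone approving it.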

The Path Ahead

AI has a great deal of promise for the future, but it’s important to recognize the hazards and take proactive steps to reduce them. We can help make sure this potent technology benefits humanity by actively contributing to the creation of safe AI and cultivating a culture of security awareness.