A Rapidly Shifting Landscape

The Shift No Company Can Ignore

The immersive technology landscape is undergoing a seismic shift. Advances in generative AI and machine learning are reshaping not only how content is created, but also how quickly it can be deployed at scale. From photorealistic digital environments to interactive digital twins, organizations are under growing pressure to prototype faster and deliver increasingly high‑fidelity experiences without sacrificing realism or performance.

This acceleration is creating both opportunity and tension, particularly for enterprises seeking to adopt immersive 3D experiences as part of their core digital strategy.

The Enterprise Challenge with Immersive Content

Across industries, immersive 3D experiences are emerging as a strategic differentiator. Smart campuses, operational digital twins, immersive training, and simulation environments are moving from experimentation into production. Yet for many enterprises, adoption has been constrained by a persistent challenge: 3D content creation remains slow, expensive, and difficult to scale.

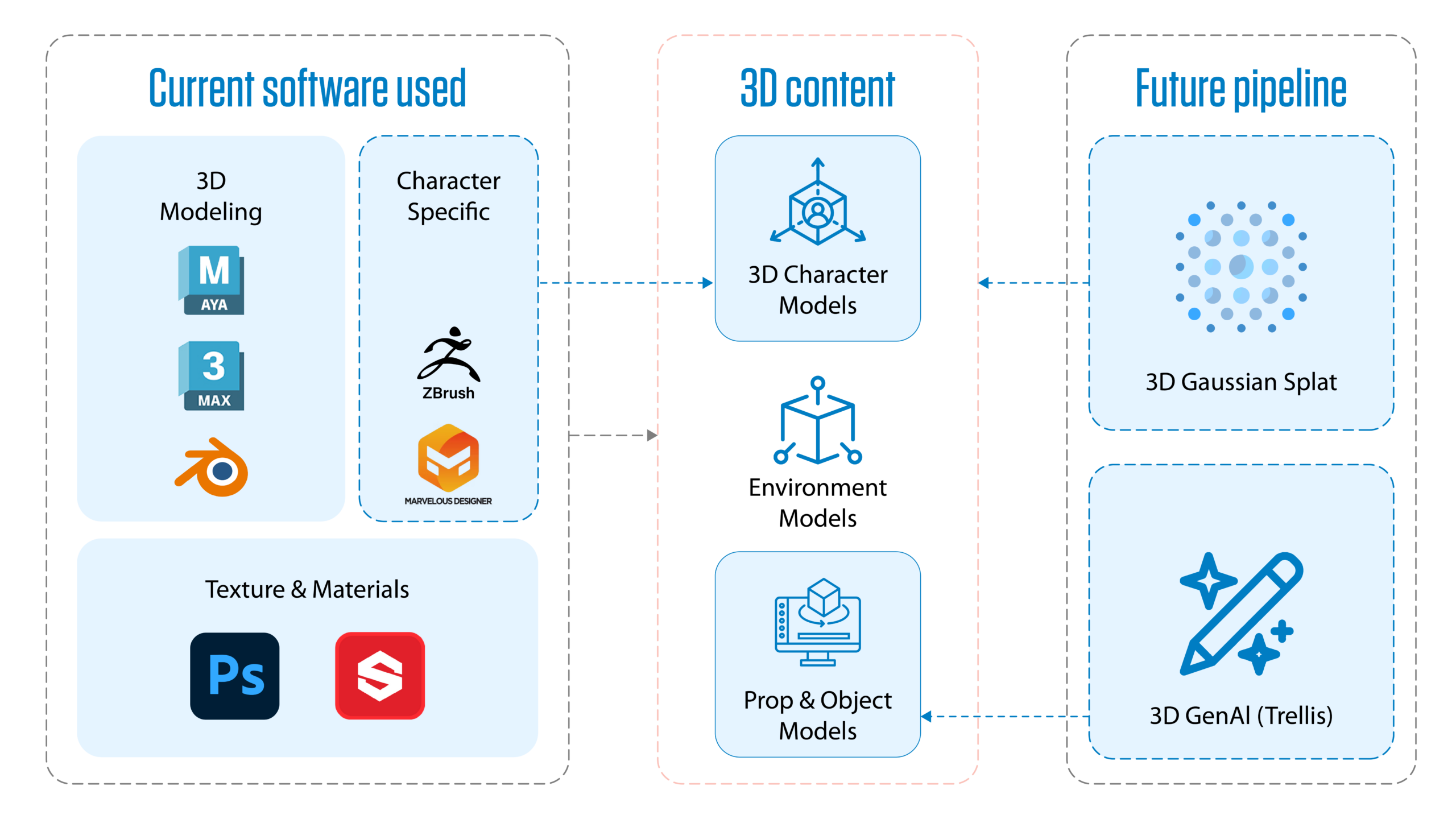

Traditional production pipelines rely heavily on manual 3D modeling, photogrammetry, and asset sourcing. These workflows require specialized expertise, extended production timelines, and extensive optimization to meet real-time performance constraints. While the resulting experiences can be visually compelling, the effort involved often limits iteration, experimentation, and broad enterprise rollout.

Recent advances in generative AI are beginning to change this dynamic fundamentally. Rather than incrementally improving existing workflows, AI is reshaping how 3D content is captured, generated, and deployed across immersive platforms.

The Rise of Generative Content Pipelines

Gaussian Splatting Goes Real-Time (and Why That Matters)

Traditional pipelines built on polygonal meshes, whether produced through photogrammetry or manual 3D modeling, have historically been slow, expensive, and difficult to scale. They demand specialized skills, long production cycles, and complex optimization to meet real-time rendering requirements.

Meet Gaussian Splatting

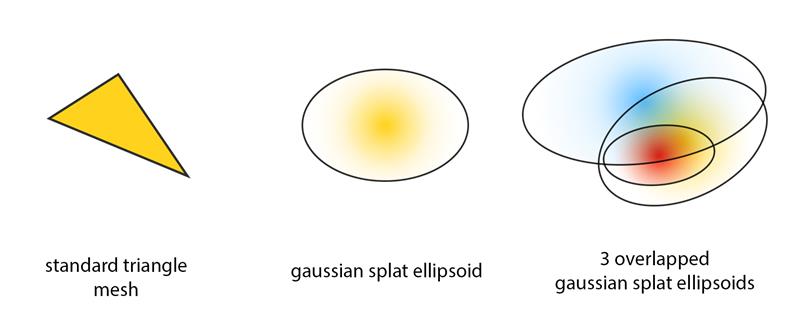

Instead of the triangles used in standard polygonal meshes, Gaussian Splatting draws a scene with translucent ellipsoids called Gaussians. Each Gaussian is defined by its position in space, a covariance that sets its shape and scale, an RGB color value, and an alpha (transparency) value. These Gaussians are projected onto the screen, often hundreds of thousands or more at once, to create fast, photorealistic 3D visualizations. Think of it like painting a hologram with soft points of light, each splat a brushstroke of radiance, blending into form and depth.
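To make that definition concrete, here is a minimal Python sketch of the data a single Gaussian might carry. It assumes the covariance is stored as per-axis scales plus a rotation quaternion and rebuilt as Σ = R S Sᵀ Rᵀ, which is a common parameterization in Gaussian Splatting implementations rather than something specified in this article. The class and field names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Gaussian3D:
    position: Vec3                               # centre of the ellipsoid in world space
    scale: Vec3                                  # radii along the ellipsoid's local axes
    rotation: Tuple[float, float, float, float]  # orientation as a quaternion (w, x, y, z)
    color: Vec3                                  # RGB, each channel in [0, 1]
    alpha: float                                 # opacity in [0, 1]

    def rotation_matrix(self) -> List[List[float]]:
        """Convert the (normalized) quaternion into a 3x3 rotation matrix."""
        w, x, y, z = self.rotation
        n = math.sqrt(w * w + x * x + y * y + z * z)
        w, x, y, z = w / n, x / n, y / n, z / n
        return [
            [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
            [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
            [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
        ]

    def covariance(self) -> List[List[float]]:
        """Build the 3x3 covariance as R * diag(scale^2) * R^T."""
        r = self.rotation_matrix()
        s2 = [s * s for s in self.scale]
        return [
            [sum(r[i][k] * s2[k] * r[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)
        ]
```

With an identity rotation the covariance is simply a diagonal matrix of squared scales; rotating the Gaussian reorients the same ellipsoid without changing its size.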

How Does It Work?

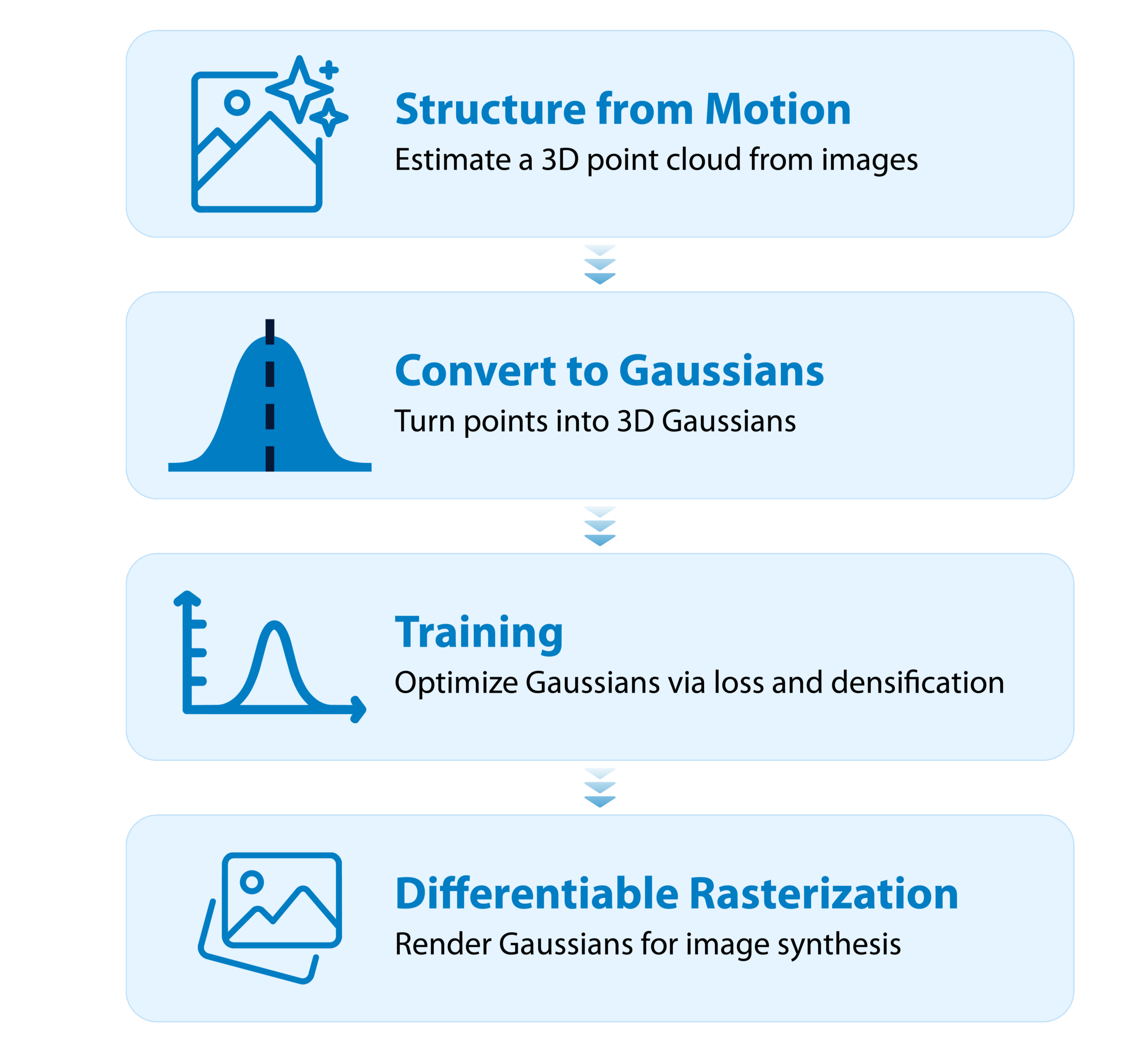

- 3D Gaussian Splatting begins with Structure from Motion (SfM), a computer vision approach that recovers a 3D representation of a scene from multiple 2D images captured from varying viewpoints, such as frames extracted from a video sequence. Open-source tools like COLMAP are commonly used for this reconstruction. SfM works by detecting visual features across images, like edges or corners, and triangulating them to estimate both camera positions and 3D point locations.

- Next, those points are converted into Gaussians, each with a position, color, and size. To get high-quality results, the Gaussians must then be trained. In general, the more iterations and time spent on training, the more accurately the Gaussians reproduce the scene.

- Using differentiable rasterization, the Gaussians are rendered, compared against the original captured images (the ground truth), and optimized with gradient descent. The system refines the scene by splitting, cloning, or pruning Gaussians based on detail and relevance. Finally, they are projected, depth-sorted, and blended, enabling fast, photorealistic, real-time 3D rendering.
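The splitting, cloning, and pruning mentioned in the last step can be sketched as a simple rule applied to each Gaussian between training iterations. This is an illustrative simplification: the thresholds, the `Splat` fields, and the function name are assumptions chosen for the example, and a real trainer would also reposition the new Gaussians rather than only duplicating them.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass
class Splat:
    scale: float  # characteristic size of the Gaussian
    alpha: float  # opacity in [0, 1]
    grad: float   # average positional gradient magnitude seen during training


# Hypothetical thresholds, picked only for illustration.
GRAD_THRESHOLD = 0.0002  # above this, the region is considered under-reconstructed
SIZE_THRESHOLD = 0.01    # separates "large" Gaussians (split) from "small" ones (clone)
MIN_ALPHA = 0.005        # nearly transparent Gaussians contribute little and are pruned
SPLIT_FACTOR = 1.6       # how much each child shrinks when a large Gaussian is split


def densify_and_prune(splats: List[Splat]) -> List[Splat]:
    """Return a refined list: prune faint splats, split large ones, clone small ones."""
    refined: List[Splat] = []
    for s in splats:
        if s.alpha < MIN_ALPHA:
            continue  # prune: too transparent to matter
        if s.grad <= GRAD_THRESHOLD:
            refined.append(s)  # well fitted already: keep unchanged
        elif s.scale > SIZE_THRESHOLD:
            # split: one large Gaussian covering too much detail becomes two smaller ones
            child = replace(s, scale=s.scale / SPLIT_FACTOR)
            refined.extend([child, replace(child)])
        else:
            # clone: a small Gaussian in an under-covered region gets a duplicate
            refined.extend([s, replace(s)])
    return refined
```

The design intent is that detail is added only where the optimizer signals a poor fit (a large gradient), while Gaussians that no longer contribute are removed to keep the scene compact.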

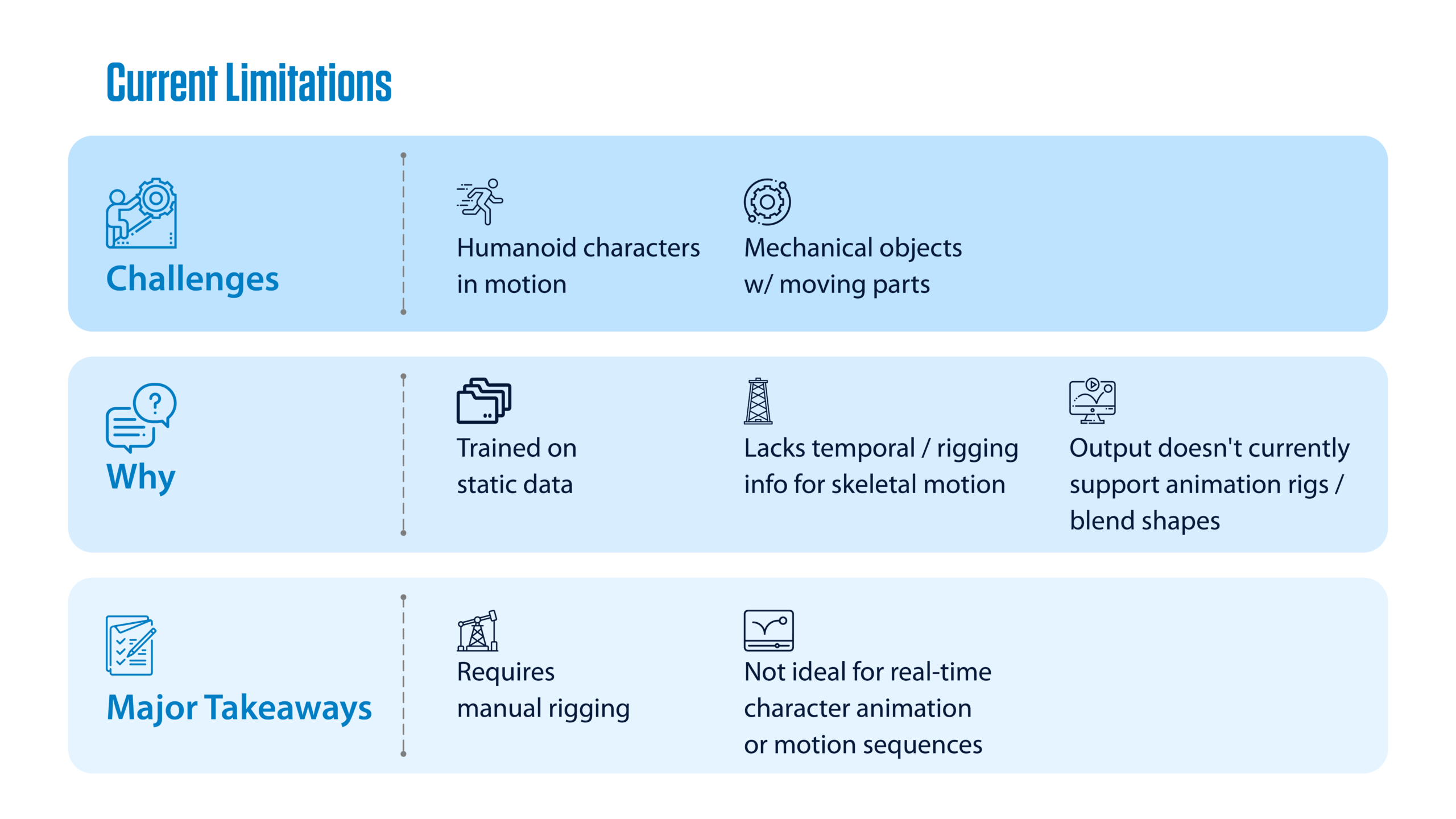
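The final depth-sort and blend step can be illustrated for a single pixel. The sketch below assumes each splat has already been projected and reduced to a depth, an RGB color, and an effective opacity at that pixel; it then composites them front to back. The function name and tuple layout are hypothetical.

```python
from typing import List, Tuple

# (depth, (r, g, b), alpha): alpha is the splat's effective opacity at this pixel
ProjectedSplat = Tuple[float, Tuple[float, float, float], float]


def composite_pixel(splats: List[ProjectedSplat]) -> Tuple[Tuple[float, float, float], float]:
    """Blend splats front to back; return the pixel color and leftover transmittance."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through to splats behind

    # Nearest splats first, so closer ones occlude those farther away.
    for _depth, rgb, alpha in sorted(splats, key=lambda s: s[0]):
        weight = alpha * transmittance
        for c in range(3):
            color[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:
            break  # pixel is effectively opaque; remaining splats are hidden

    return (color[0], color[1], color[2]), transmittance
```

For example, a half-transparent red splat in front of an opaque blue one yields an even mix of the two colors. Because the result depends on the sort order, errors in depth sorting show up directly as visual artifacts.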

Current Limitations

- Static Scenes Only: The baseline technique does not support moving objects or dynamic content.

- No Explicit Geometry: Lacks traditional meshes, making interaction, physics, and collisions difficult.

- Limited Editing: Hard to modify or isolate specific elements within the scene.

- View-Dependent Artifacts: Quality may degrade if the scene is captured from limited or narrow angles.

- Tooling Still Emerging: Few standardized tools; workflows are less mature than mesh-based pipelines.

- Challenging Integration: Not yet widely supported in engines like Unity or Unreal.

- Transparency & Blending Issues: Overlapping splats can cause depth-sorting errors and visual artifacts.

Key Takeaways: Gaussian Splatting in Practice

- No need for traditional 3D modeling: Gaussian Splatting bypasses the need for sculpting, meshing, and manual texturing. What once took teams of artists and days of work can now be accomplished with consumer-grade video capture and AI-powered rendering.

- Lightweight and fast: Produces high-fidelity 3D scenes with reduced file sizes and faster training times, making it especially suited for WebXR, mobile platforms, and real-time applications.

- Efficient rendering of complex scenes: Ideal for VR, gaming, and enterprise simulations, Gaussian Splatting enables rapid, photorealistic scene reconstruction with significantly lower computational overhead.

- Accelerates production cycles: Our team has used splat-based scene capture in prototypes for immersive onboarding and virtual walkthroughs, drastically reducing the time and effort required to create compelling environments.

- Shifting creative authorship: This isn’t just about speed. It represents a fundamental shift in authorship, where creative control moves from manual construction to curated capture and AI refinement.

- Tooling is early but promising: While still evolving, 3D Gaussian Splatting is redefining what’s possible. Challenges like depth sorting, relighting, dynamic content, and animation limitations remain, but we’re actively exploring solutions to build a scalable, end-to-end pipeline for next-gen immersive experiences.

Infosys Proof-of-Value: From Weeks to Hours

Infosys has been actively piloting AI-driven 3D pipelines to evaluate their enterprise readiness. One such proof-of-value focused on reimagining how physical spaces can be transformed into immersive digital experiences.

Using an AI-driven reconstruction pipeline, a traditionally labor-intensive environment capture, often requiring several weeks of manual modeling, was completed within hours. The resulting scene preserved photorealism, supported real-time navigation, and integrated seamlessly into an interactive application.

The outcome demonstrated an approximately 80% reduction in production time, enabling faster iteration and significantly lowering the barrier to entry for immersive enterprise experiences.

More importantly, it validated a key insight: AI-driven 3D pipelines are no longer experimental; they are viable accelerators for enterprise-scale deployment.

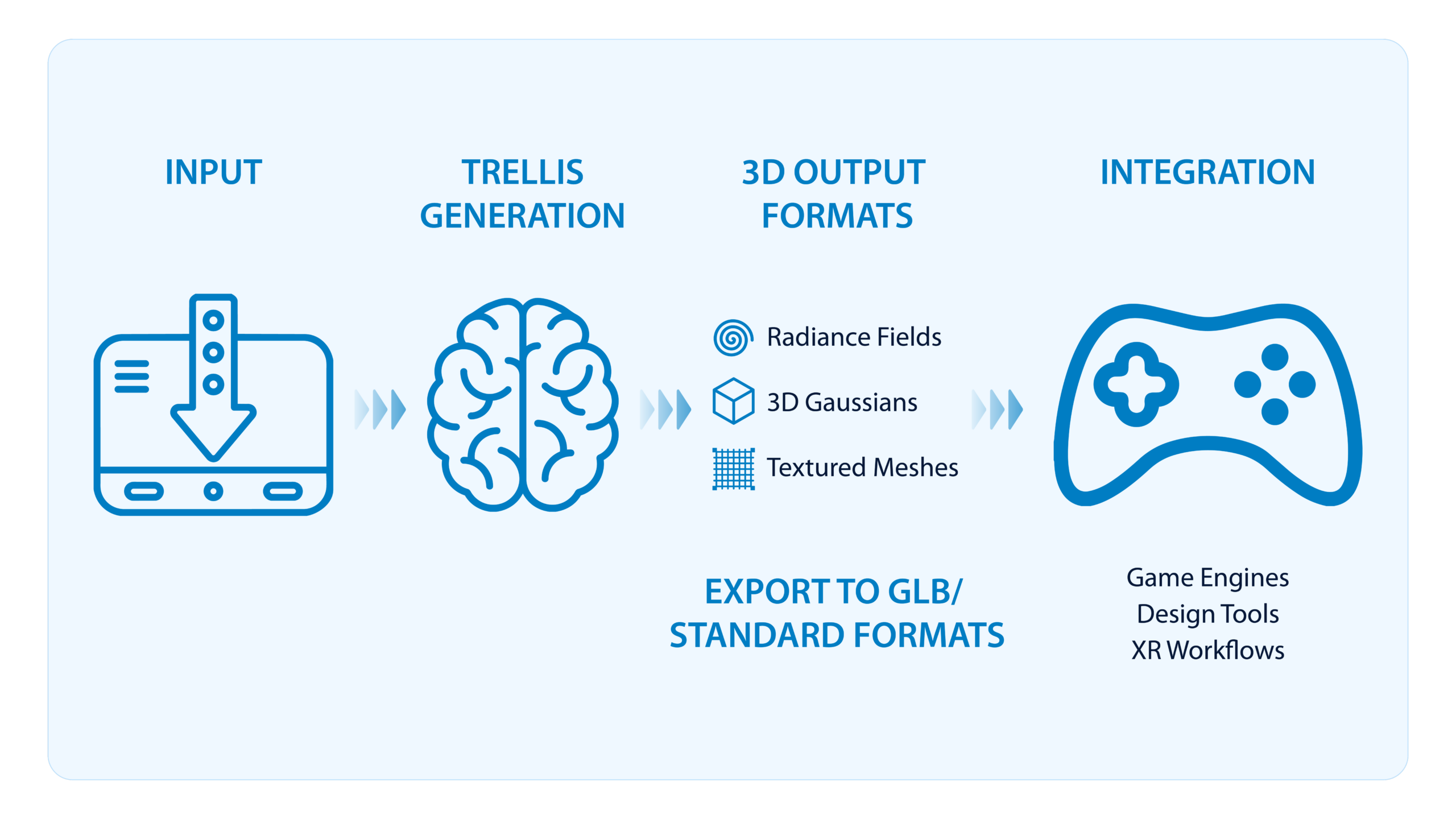

Breaking the Asset Bottleneck with Prompt-to-3D Generation

While scene capture addresses environmental realism, another long-standing bottleneck in immersive development has been asset creation. Sourcing, customizing, and licensing 3D objects often slows down prototyping and limits creative flexibility.

Prompt-to-3D tools are changing that dynamic.

By generating 3D assets directly from text or reference images, these tools enable teams to move from concept to visualization in minutes rather than days. For enterprise teams, this unlocks:

- Faster ideation and design exploration

- On-demand asset customization

- Reduced reliance on stock libraries

- Greater creative control earlier in the design cycle

In Infosys pilots, prompt-to-3D workflows dramatically compressed concept development timelines, allowing designers and architects to validate ideas quickly before committing to production-grade assets.

Why Hybrid Pipelines Matter

Despite their promise, AI-driven techniques are not a wholesale replacement for traditional 3D workflows. Each approach has strengths and limitations.

Gaussian Splatting excels at capturing reality but is best suited for static scenes. Prompt-to-3D generation accelerates asset creation but often requires human-in-the-loop (HITL) refinement before production use. Traditional modeling, on the other hand, remains essential for complex interactions, animation, and dynamic environments.

The most effective approach is therefore hybrid by design:

- AI accelerates capture and ideation

- Human expertise ensures quality, intent, and control

- Established pipelines manage validation, optimization, and governance

This balance allows enterprises to scale immersive content creation without sacrificing reliability or creative intent.

The Human Dimension of AI Adoption

As AI accelerates production, it also reshapes the roles of creators, designers, and developers. Productivity gains are real, but so is the responsibility to ensure sustainable talent development.

Successful enterprises are recognizing that AI does not eliminate the need for creative expertise; it changes how that expertise is applied. Artists and developers evolve from manual builders to curators, orchestrators, and quality stewards within AI-enhanced workflows.

Investing in upskilling, mentorship, and hybrid roles is critical to avoiding short-term efficiency gains that undermine long-term resilience.

What This Means for Enterprise Leaders

For organizations exploring immersive technologies, the implications are clear:

- AI-driven 3D pipelines can dramatically reduce time-to-value

- Early adoption should focus on high-impact, controlled use cases

- Governance, IP validation, and workflow integration remain essential

- Human expertise remains central; AI amplifies it rather than replacing it

Enterprises that approach generative 3D as a strategic capability, rather than a standalone tool, will be best positioned to scale immersive experiences responsibly and effectively.

Looking Ahead

We are entering an era where immersive experiences can be created at the speed of imagination. As generative AI continues to mature, the question is no longer if enterprises should explore AI-driven 3D pipelines, but how they integrate them thoughtfully into existing ecosystems.

At Infosys, we continue to evaluate, prototype, and refine these pipelines, focused on delivering scalable, enterprise-ready immersive solutions that balance speed, quality, and human creativity.

The workflows are real.

The acceleration is measurable.

And the future of immersive enterprise experiences is already taking shape.