When Machines Learned to Think at Scale: The Rise of AI Supercomputing Platforms

It didn’t happen overnight. At first, machines were fast calculators—excellent at crunching numbers, terrible at understanding meaning. Then came data. Then more data. And suddenly, the world realized something unsettling and exciting at the same time: intelligence doesn’t emerge from algorithms alone—it emerges from scale. That realization gave birth to AI supercomputing platforms.

AI supercomputing platforms are the new power engines of innovation — vast clusters of GPUs, TPUs, and purpose-built hardware designed to train and run the world’s largest AI models and simulations. By unlocking unprecedented scale, speed, and parallel processing, they enable organizations to tackle datasets and compute challenges that were once impossible or painfully slow. Unlike classical systems, these platforms are purpose-built for neural network training, generative AI, and deep pattern discovery, positioning them as the backbone of next-generation AI systems transforming industries end to end.

As AI supercomputing scales to train ever-larger models, the energy consumed by data centers has become a central concern, not only for operating cost but also for carbon footprint and sustainability. One key strategy is to design power-aware AI workloads and carbon-aware scheduling that shift computation and training tasks according to grid carbon intensity and energy cost; research suggests this can cut emissions by 20–35% through optimized scheduling and energy-efficient algorithms without major performance loss. Beyond software, advanced cooling technologies play a major role in improving energy efficiency. Replacing traditional air cooling with liquid cooling can reduce energy consumption significantly: studies report up to ~29% lower energy use and better Power Usage Effectiveness (PUE), which lowers both the cost and the environmental impact of AI supercomputing. Liquid cooling also improves system reliability and supports higher rack power densities by removing heat directly from CPUs and GPUs through more efficient fluid heat transfer. Going a step further, immersion cooling, where servers are submerged in thermally conductive dielectric fluids, can drive PUE close to the ideal value of 1.0 and reduce water use and infrastructure footprint while enabling higher sustained performance. Together, smarter energy scheduling and liquid and immersion cooling represent the current frontier in making AI supercomputing both powerful and sustainable, aligning advanced computation with broader environmental goals.
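To make the scheduling idea concrete, here is a minimal sketch of carbon-aware scheduling and of how PUE feeds into an emissions estimate. The function names, the hourly forecast values, and the simple sliding-window policy are assumptions made for illustration; they are not taken from any particular scheduler or study. PUE is used in its standard sense: total facility energy divided by IT equipment energy.

```python
def pick_greenest_start(forecast_g_per_kwh, job_hours):
    """Return (start_index, mean_intensity) for the contiguous block of
    `job_hours` hours with the lowest mean grid carbon intensity."""
    if job_hours <= 0 or job_hours > len(forecast_g_per_kwh):
        raise ValueError("job_hours must be between 1 and len(forecast)")
    best_start, best_mean = 0, float("inf")
    for start in range(len(forecast_g_per_kwh) - job_hours + 1):
        window = forecast_g_per_kwh[start:start + job_hours]
        mean = sum(window) / job_hours
        if mean < best_mean:
            best_start, best_mean = start, mean
    return best_start, best_mean


def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    return total_facility_kwh / it_equipment_kwh


def emissions_kg(it_kwh, pue_value, carbon_g_per_kwh):
    """Estimated emissions in kg CO2: IT energy scaled by PUE, times grid intensity."""
    return it_kwh * pue_value * carbon_g_per_kwh / 1000.0


# Hypothetical hourly forecast in gCO2/kWh (illustrative values only).
forecast = [450, 420, 300, 180, 160, 210, 380, 470]

# Choose when to launch a 3-hour training job.
start, mean_intensity = pick_greenest_start(forecast, job_hours=3)
print(f"start at hour {start}, mean intensity {mean_intensity:.1f} gCO2/kWh")

# Compare running immediately with running in the greenest window,
# assuming 1000 kWh of IT energy and a facility PUE of 1.5.
now_kg = emissions_kg(1000, 1.5, sum(forecast[:3]) / 3)
shifted_kg = emissions_kg(1000, 1.5, mean_intensity)
print(f"run now: {now_kg:.0f} kg, shifted: {shifted_kg:.0f} kg")
```

A production scheduler would also have to respect deadlines, cluster availability, and energy prices. The sketch only shows the core trade: the same job, moved to a cleaner window or run in a facility with a lower PUE, emits less.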

Understanding AI Supercomputing Platforms

AI supercomputing platforms are purpose-built computing infrastructures designed to support the massive computational demands of modern artificial intelligence, especially large-scale deep learning and generative AI models. Unlike traditional high-performance computing (HPC) systems that were primarily geared toward scientific simulations, AI supercomputers focus on accelerated matrix math, high memory bandwidth, and distributed data processing to enable training and inference for models with billions or trillions of parameters. These systems typically integrate thousands of GPUs, AI accelerators, high-speed interconnects, and optimized software stacks to deliver unprecedented throughput and scalability.

Why They Matter Today

The rapid rise of generative AI and foundation models has dramatically increased the need for more powerful computing backbones. Modern AI supercomputing platforms are now being deployed globally to support advanced research and industrial applications — from drug discovery and climate simulation to real-time personalization and language models — making them foundational to the next wave of digital innovation. Major players like NVIDIA and cloud providers continue to expand this infrastructure, powering research labs and enterprises with exascale-class systems while pushing efficiency and scalability even further.

Recent Accelerations & Strategic Importance

In the past year, investments and deployments in AI supercomputing have surged. National labs and research institutions across the U.S., Europe, and Asia are unveiling new AI-optimized supercomputers to support cutting-edge scientific and industrial workloads, while companies are building dedicated AI “factories” — massive, purpose-built AI data centers — to train next-generation models faster and more cost-effectively. These developments reflect a broader shift toward AI at scale, with supercomputing platforms positioned at the center of the global AI infrastructure race.

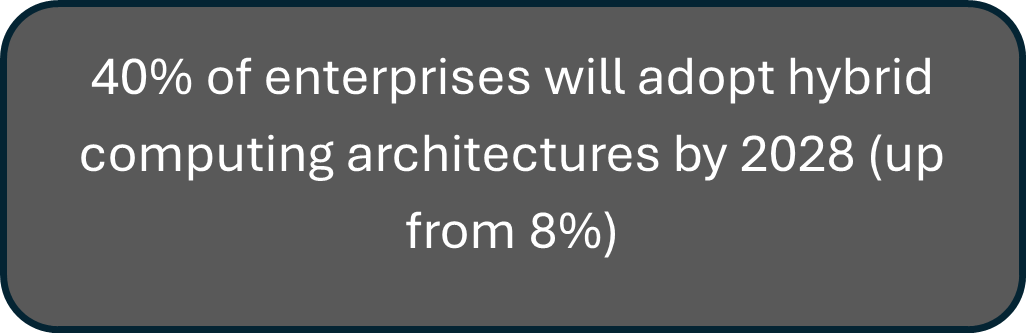

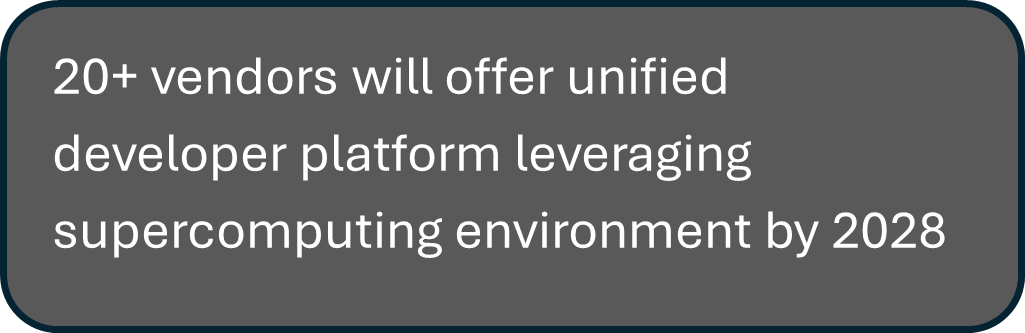

Statistics

According to Gartner

2. The Evolution of AI Supercomputing

2.1 Early High-Performance Computing vs. Modern AI Needs

Traditional HPC systems were designed for deterministic, physics-based simulations relying heavily on CPUs and tightly coupled architectures. Modern AI workloads, in contrast, demand massive parallelism, high memory bandwidth, and efficient handling of probabilistic, data-intensive computations, pushing beyond classical HPC design assumptions.

2.2 Shift from CPU-Centric to Accelerator-Centric Architectures

The exponential growth of deep learning models has driven a transition from CPU-dominated systems to accelerator-centric designs using GPUs, TPUs, and custom AI chips. These accelerators optimize matrix operations and tensor processing, delivering significantly higher performance and energy efficiency for training and inference workloads.

2.3 The Rise of Distributed AI Training and Inference Systems

As AI models scale to billions or trillions of parameters, single-node systems are no longer sufficient. Distributed architectures leveraging high-speed interconnects, data parallelism, and model parallelism now underpin AI supercomputing, enabling faster training and real-time inference across geographically distributed environments.
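Data parallelism, mentioned above, can be illustrated with a toy example. In this sketch each simulated worker computes a gradient on its own shard of the data, and an averaging step stands in for the all-reduce collective that real clusters run over their interconnects. The one-parameter linear model, the function names, and the data are all assumptions chosen to keep the example small; real systems use a framework's distributed training utilities instead.

```python
def local_gradient(w, shard):
    """Mean gradient of the squared error (w*x - y)**2 over one worker's shard."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker ends up with the mean gradient."""
    return sum(values) / len(values)


def shard_evenly(data, num_workers):
    """Split the dataset into equal-sized shards, one per worker."""
    if len(data) % num_workers != 0:
        raise ValueError("dataset size must be divisible by num_workers")
    size = len(data) // num_workers
    return [data[i * size:(i + 1) * size] for i in range(num_workers)]


def data_parallel_step(w, shards, lr):
    """One synchronous step: local gradients, all-reduce, identical update."""
    grads = [local_gradient(w, shard) for shard in shards]
    return w - lr * all_reduce_mean(grads)


# Toy dataset generated from y = 3x; the model should recover w = 3.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
shards = shard_evenly(data, num_workers=4)

# With equal-sized shards, the averaged gradient equals the full-batch gradient.
full_batch_w = 0.0 - 0.01 * local_gradient(0.0, data)
parallel_w = data_parallel_step(0.0, shards, lr=0.01)

w = 0.0
for _ in range(50):
    w = data_parallel_step(w, shards, lr=0.01)
print(f"learned w = {w:.6f}")
```

Model parallelism is the complementary technique: instead of splitting the data, the model's own layers or tensors are split across devices, which becomes necessary once the parameters no longer fit in a single accelerator's memory.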

2.4 Key Milestones in AI Supercomputing Development

Major milestones include the introduction of GPUs for deep learning, the launch of domain-specific accelerators like Google’s TPU, advances in high-bandwidth memory and interconnects, and the emergence of cloud-based AI supercomputers. Together, these developments have redefined compute scalability, performance, and accessibility for AI innovation.

AI Supercomputing Innovations

1. Microsoft, OpenAI Partnership, and Azure’s AI Infrastructure

Microsoft’s multibillion-dollar partnership with OpenAI has driven the development of specialized AI supercomputing infrastructure on Azure, accelerating breakthrough AI research.

Azure’s evolution into a large-scale AI supercomputer has democratized access to advanced AI capabilities.

This foundation has enabled category-defining innovations such as GitHub Copilot and DALL·E 2.

2. Google’s TPU and Supercomputing Techniques

Google’s TPU-driven supercomputing approach has redefined AI training, using flexible chip interconnects to efficiently train large-scale language models. With over 90% of its AI workloads running on TPUs, Google continues to push new benchmarks in AI performance and scalability.

3. IBM’s Supercomputer Innovations

IBM’s Vela supercomputer, integrated into IBM Cloud, is purpose-built for foundation model research, featuring a 60-rack system with NVIDIA A100-powered nodes. By leveraging 100Gb Ethernet instead of traditional HPC interconnects, IBM delivers efficient, AI-optimized supercomputing at scale.

4. The U.K. Government’s Isambard-AI Investment

The U.K. government’s recent commitment of £225 million ($273 million) to build Isambard-AI underscores its strong push to accelerate advanced AI research. Powered by 5,448 NVIDIA GH200 Grace Hopper Superchips, Isambard-AI is expected to deliver 21 exaflops of AI performance, significantly expanding research and innovation capabilities in the U.K. and beyond. The investment highlights AI’s transformative potential, often compared to the impact of the Industrial Revolution, across manufacturing and many other industries. It marks a decisive milestone in the global AI landscape and sets a strong precedent for future large-scale AI investments.
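As a quick sanity check on those figures, the headline number can be divided by the chip count to see what it implies per superchip. This is plain arithmetic on the two values quoted above. The text does not say what numeric precision the "AI performance" figure is measured at, so the per-chip result is only as meaningful as the headline number itself.

```python
# Figures quoted in the text for Isambard-AI.
AI_PERFORMANCE_FLOPS = 21e18   # 21 exaflops of "AI performance"
NUM_SUPERCHIPS = 5_448         # NVIDIA GH200 Grace Hopper Superchips

# Implied AI performance per superchip, in petaflops (1 petaflop = 1e15 FLOPS).
per_chip_petaflops = AI_PERFORMANCE_FLOPS / NUM_SUPERCHIPS / 1e15

print(f"{per_chip_petaflops:.2f} petaflops per superchip")  # prints 3.85
```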

Future Roadmap: From Exascale to Intelligence

Autonomous optimization & photonic interconnects

AI supercomputing platforms are evolving toward self-optimizing systems where AI autonomously manages workload scheduling, power efficiency, fault prediction, and hardware utilization at exascale and beyond. Photonic (optical) interconnects are becoming critical enablers, dramatically reducing latency and energy consumption while supporting ultra-high bandwidth data movement between GPUs, accelerators, and memory—key for training trillion-parameter models efficiently.

Convergence with edge and real-time systems

The next phase of AI supercomputing extends beyond centralized data centers to tightly integrate with edge and real-time systems. This convergence enables models to be trained at intelligence-scale in the cloud and deployed seamlessly at the edge for low-latency inference, adaptive learning, and real-time decision-making. It supports use cases such as autonomous systems, industrial AI, and smart retail by creating a continuous feedback loop between large-scale training and real-world execution.

AI supercomputers are expected to transform multiple industry verticals. In healthcare, for example, AI supercomputing accelerates drug discovery and personalized medicine by rapidly screening molecular interactions and predicting treatment outcomes, helping bring therapies to market faster and at lower cost, as seen in Eli Lilly’s partnership with NVIDIA to build a dedicated AI supercomputer for drug R&D. In automotive and aerospace, these platforms simulate vehicle designs and autonomous systems in silico, improving safety and efficiency long before physical prototypes are built. In finance, they enable real-time risk analysis, fraud detection, and algorithmic trading over huge data streams, while climate science uses them for high-resolution global forecasting. By unlocking faster insights, smarter automation, and deeper predictive power, AI supercomputing is a catalyst for competitive advantage and the next wave of digital transformation across industries.

Case Studies

1. Eli Lilly × NVIDIA – AI Supercomputer for Drug Discovery

Problem: Traditional drug discovery is slow, costly and complex, often taking a decade or more to find, test, and bring new medicines to patients.

Partnership Details: Pharmaceutical leader Eli Lilly partnered with NVIDIA to build a dedicated AI supercomputer, an “AI factory” based on NVIDIA DGX SuperPOD systems with more than 1,000 Blackwell B300 GPUs. The system combines Lilly’s scientific data with NVIDIA’s AI infrastructure and platforms such as BioNeMo. The two companies also announced a long-term co-innovation lab, with up to $1 billion in investment over five years, for shared research, model development, and AI workflows.

Solution Offered: The supercomputer trains large AI models on millions of experimental data points to identify promising drug molecules, simulate biological processes, and shorten R&D timelines. It also supports manufacturing, medical imaging, and enterprise AI applications, and offers proprietary AI models via Lilly’s federated platform TuneLab, ensuring data privacy while enabling external biotech access.

Conclusion: This collaboration aims to dramatically accelerate drug discovery, reduce costs and timeline barriers, and build an industry-wide blueprint for AI in life sciences, though real-world clinical impacts and approved drugs may take years to materialize.

2. Recursion Pharmaceuticals × NVIDIA – BioHive-2 AI Supercomputer

Problem: The complexity and scale of biological data in drug discovery require massive compute power to train AI models capable of finding meaningful patterns — far beyond what traditional lab experiments or smaller clusters permit.

Partnership Details: AI-driven biotech company Recursion Pharmaceuticals teamed with NVIDIA, supported by a strategic partnership including a ~$50 million investment, to build BioHive-2, a next-generation AI supercomputer using NVIDIA DGX H100 systems with hundreds of GPUs and high-speed interconnects.

Solution Offered: BioHive-2, now one of the most powerful systems owned by any pharmaceutical company and ranked in the TOP500 supercomputer list, massively accelerates Recursion’s ability to train large foundation models across biology and chemistry using its vast proprietary datasets. With this scale, Recursion can execute multiple high-complexity AI tasks in parallel — from cellular image analysis (e.g., Phenom-1 models) to protein interaction predictions — far faster than before.

Conclusion: By combining Recursion’s data platform and AI-first approach with NVIDIA’s supercomputing, BioHive-2 significantly expands AI-driven drug research capabilities, helping industrialize discovery workflows and enabling new biological insights at speeds not previously possible.

In a nutshell, AI supercomputing platforms are rapidly becoming the digital backbone of the AI-driven economy, powering breakthroughs that were previously constrained by compute limits. By combining massive GPU/TPU clusters, high-speed interconnects, and AI-optimized software stacks, these platforms enable organizations to train foundation models, run complex simulations, and extract insights from vast datasets at unprecedented speed and scale. From accelerating drug discovery and advancing climate modeling to enabling autonomous systems, real-time financial risk analysis, and next-generation generative AI, AI supercomputers are shifting innovation cycles from years to weeks. As investments grow and access expands through cloud and hybrid models, AI supercomputing platforms will not just support innovation; they will define competitive advantage and reshape how industries build, deploy, and scale intelligence in the decade ahead.

References

- https://nvidianews.nvidia.com/news/nvidia-partners-ai-infrastructure-america

- https://www.e-spincorp.com/why-ai-supercomputing-platforms-are-the-new-digital-backbone/

- https://www.researchgate.net/publication/381580097_AI-coupled_HPC_Workflow_Applications_Middleware_and_Performance

- https://blogs.nvidia.com/blog/uk-largest-ai-supercomputer/

- https://datasciencelearningcenter.substack.com/p/microsoft-and-openai-do-the-expected

- https://www.unifiedaihub.com/ai-news/googles-tpus-reshaping-ai-hardware-landscape-challenge-to-nvidia

- https://cambrian-ai.com/ibm-research-touts-ai-supercomputer-for-foundation-ai/

- https://www.reuters.com/business/healthcare-pharmaceuticals/lilly-partners-with-nvidia-ai-supercomputer-speed-up-drug-development-2025-10-28/

- https://www.opal-rt.com/blog/simulation-first-is-the-new-standard-in-autonomous-vehicle-testing/