Software delivery cycles are shrinking, leaving quality assurance (QA) teams with a critical dilemma: how to maintain rigorous test quality standards while accelerating software release timelines.

Traditional regression testing involves executing thousands of test cases and is often a bottleneck for software delivery and business agility. While automation reduces manual effort, automated regression suites tend to expand over time, making end‑to‑end execution costly and time‑consuming. As release frequency increases, running the entire suite becomes neither efficient nor practical.

Artificial intelligence (AI) changes the equation. Instead of executing the entire test suite, AI‑driven impact analysis pinpoints the most relevant tests for each release, optimizing effort without compromising quality.

How AI Delivers Smarter Impact Analysis

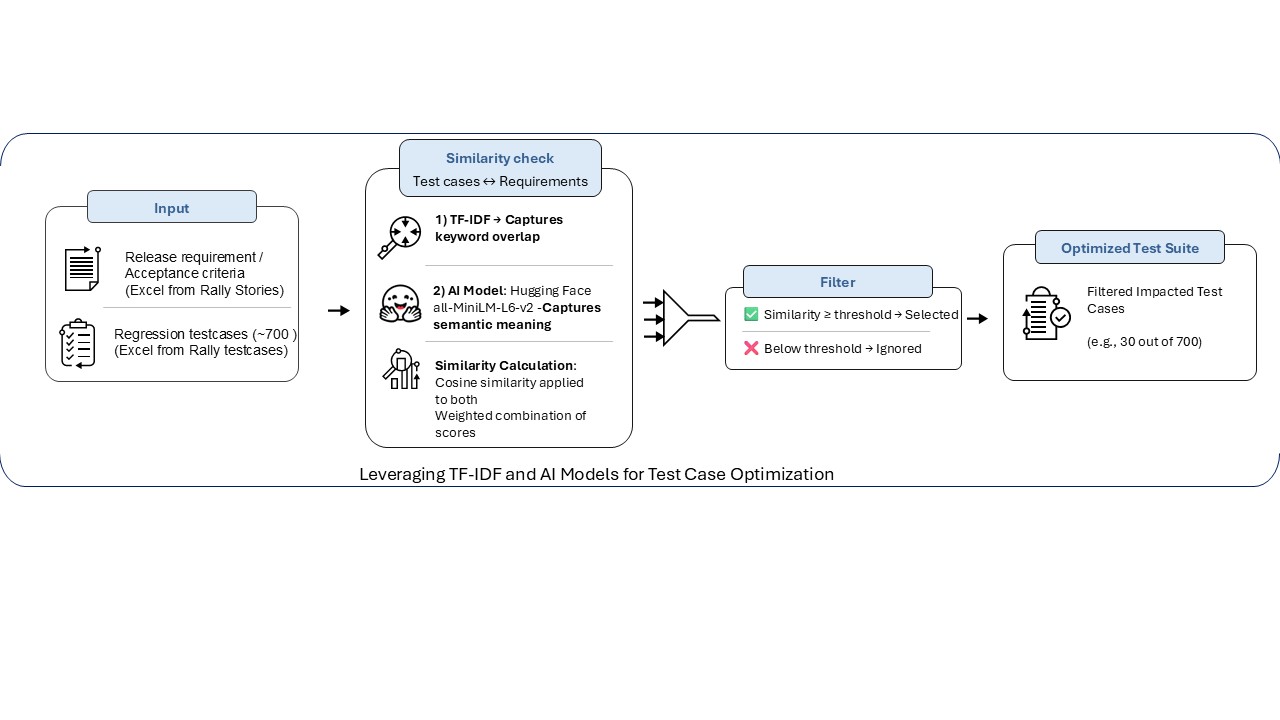

AI-driven impact analysis can fundamentally transform testing processes. By combining traditional text analysis with modern semantic understanding, intelligent systems can automatically identify which test cases matter for each release.

The hybrid approach blends two types of techniques:

- Statistical methods: These include Term Frequency–Inverse Document Frequency (TF–IDF). They excel at explicit keyword matching and at finding direct connections between requirements and test cases based on shared terminology.

- Semantic AI models: These models can understand context and meaning. For instance, they recognize that ‘user authentication’ and ‘login security’ describe related concepts even though the exact words differ. Such AI models capture intent, not just vocabulary.

By pairing AI-based semantic understanding with the precision of keyword analytics, QA teams get impact analysis results that are both accurate and explainable, two key criteria for building trust with testing teams.
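The hybrid scoring described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production implementation: the TF–IDF part is written with the standard library only, and `CONCEPTS`, `toy_semantic_similarity`, `rank_tests`, and the blending weight `alpha` are hypothetical names introduced here. The toy concept map merely stands in for a real semantic model, which a team would likely replace with sentence embeddings from an open-source library.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def tfidf_vectors(docs):
    """Build one sparse TF-IDF vector (term -> weight dict) per document."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    df = Counter(term for toks in tokenized for term in set(toks))
    # Smoothed IDF so that terms found in every document keep a small weight.
    idf = {t: math.log((1 + n) / (1 + c)) + 1 for t, c in df.items()}
    return [{t: tf * idf[t] for t, tf in Counter(toks).items()} for toks in tokenized]


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    na = math.sqrt(sum(w * w for w in a.values()))
    nb = math.sqrt(sum(w * w for w in b.values()))
    return dot / (na * nb) if na and nb else 0.0


# Hypothetical stand-in for a semantic model: maps words to coarse concepts.
CONCEPTS = {
    "login": "auth", "authentication": "auth", "password": "auth", "security": "auth",
    "payment": "billing", "invoice": "billing", "checkout": "billing",
}


def toy_semantic_similarity(a, b):
    """Jaccard overlap of the concepts mentioned in each text (illustrative only)."""
    ca = {CONCEPTS[t] for t in tokenize(a) if t in CONCEPTS}
    cb = {CONCEPTS[t] for t in tokenize(b) if t in CONCEPTS}
    return len(ca & cb) / len(ca | cb) if ca | cb else 0.0


def rank_tests(change, tests, semantic_fn=toy_semantic_similarity, alpha=0.5):
    """Rank test cases by a weighted blend of keyword and semantic similarity."""
    vectors = tfidf_vectors([change] + list(tests.values()))
    change_vec, test_vecs = vectors[0], vectors[1:]
    ranked = []
    for (test_id, text), vec in zip(tests.items(), test_vecs):
        keyword = cosine(change_vec, vec)
        semantic = semantic_fn(change, text)
        combined = alpha * keyword + (1 - alpha) * semantic
        ranked.append((test_id, combined, keyword, semantic))
    return sorted(ranked, key=lambda r: r[1], reverse=True)


change = "Update user authentication flow"
tests = {
    "TC-1": "Verify login security with invalid password",
    "TC-2": "Verify invoice totals at checkout",
    "TC-3": "Verify user authentication with valid credentials",
}

for test_id, combined, keyword, semantic in rank_tests(change, tests):
    print(f"{test_id}: combined={combined:.2f} keyword={keyword:.2f} semantic={semantic:.2f}")
```

In this toy run, TC-1 shares no words with the change description, so its keyword score is zero and it surfaces only through the semantic score. That is the gap the hybrid approach is meant to close. Keeping the two scores separate in the output also leaves each ranking decision easy to inspect.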

Key Principles for Successful AI Adoption

Effective implementation of AI across regression testing goes beyond just running an AI model. Three principles are essential to make AI successful:

- Explainability: Testers must see why the AI model selects certain cases. Clear similarity scores and traceability help build trust.

- Seamless integration: The tool should plug into existing workflows with minimal business disruption.

- Lightweight deployment: Local CPU execution and quick setup drive faster adoption, while heavy infrastructure slows it down.
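To make the explainability principle concrete, the sketch below shows one possible shape for a selection report. It assumes similarity scores have already been computed elsewhere. The `Explanation` record, the `explain_selection` function, the `threshold` value, and the sample scores are all hypothetical and chosen only for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Explanation:
    test_id: str
    score: float
    shared_terms: list
    selected: bool


def explain_selection(change, tests, scores, threshold=0.3):
    """Attach a human-readable trace to each precomputed similarity score."""
    change_terms = set(re.findall(r"[a-z0-9]+", change.lower()))
    report = []
    for test_id, text in tests.items():
        test_terms = set(re.findall(r"[a-z0-9]+", text.lower()))
        report.append(Explanation(
            test_id=test_id,
            score=scores[test_id],
            # Words shared with the change description act as the traceability evidence.
            shared_terms=sorted(change_terms & test_terms),
            selected=scores[test_id] >= threshold,
        ))
    return sorted(report, key=lambda e: e.score, reverse=True)


change = "Update user authentication flow"
tests = {
    "TC-1": "Verify user authentication with valid credentials",
    "TC-2": "Verify invoice totals at checkout",
}
scores = {"TC-1": 0.72, "TC-2": 0.05}  # illustrative values, not measured results

for entry in explain_selection(change, tests, scores):
    status = "RUN" if entry.selected else "SKIP"
    print(f"{status} {entry.test_id} score={entry.score:.2f} shared={entry.shared_terms}")
```

A report like this lets a tester see at a glance both the score behind each decision and the terms that link a test case back to the change.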

The Open-source Advantage

While commercial AI solutions exist, many organizations are reluctant to leverage them due to high licensing costs and complex integrations. Open‑source frameworks (like Hugging Face) and Python libraries (such as scikit‑learn and Sentence Transformers) enable enterprises to build scalable, explainable, and cost‑effective in‑house AI‑driven testing solutions.

The democratization of AI empowers teams to innovate without vendor lock‑in or heavy infrastructure investments. With the right engineering mindset, even mid‑sized QA teams can deploy advanced impact analysis tools locally, ensuring security and compliance.

Real-world results underscore the power of this approach. According to an Infosys case study, a US-based insurance provider used an AI-driven regression testing tool to achieve 95% testing accuracy and lightning-fast execution at reduced cost. Built using open-source AI, the tool cut a multi-day testing process to mere minutes, showing that innovation does not require big budgets.

Conclusion

Using AI in regression testing is not a passing trend but a strategic necessity. QA teams can start small, leverage open‑source technologies, and prioritize explainability. The results are clear: accelerated releases, smarter test coverage, and leaner operations.

In today’s speed‑driven market, automation powers rapid test execution, while AI brings intelligence to test selection by determining which tests matter most. Together, these technologies enable efficient and optimized testing. This is why AI‑driven regression testing is no longer optional but essential for QA teams.