Introduction

Artificial intelligence has shifted from experimentation to enterprise dependency at remarkable speed. Organizations across sectors now rely on AI for decision‑making, automation, customer engagement and operational efficiency. Yet the structures required to govern these systems, and to keep them safe, compliant, resilient and accountable, have not kept pace.

The result is a widening governance gap that exposes businesses to regulatory penalties, operational failures, reputational damage and strategic stagnation. As AI systems become more autonomous and more deeply embedded in critical workflows, closing this gap is no longer optional. It is a prerequisite for trust, scale and long‑term competitiveness.

Governance Maturity Is Lagging Behind AI Adoption

AI adoption has surged across industries, but governance maturity has not followed the same trajectory. Most organizations now use AI in multiple functions, yet only a minority have established clear accountability structures, risk frameworks or lifecycle controls.

This gap manifests in several ways:

- Fragmented ownership across business units

- Opaque decision‑making and limited model transparency

- Unmanaged third‑party and vendor AI risk

- Rising regulatory exposure across jurisdictions

The challenge is not simply technical. It is organizational.

Without a coherent governance layer, AI becomes a patchwork of isolated initiatives rather than a strategic capability.

Agentic AI Raises the Stakes

Agentic AI systems, capable of planning, tool use and autonomous action, have fundamentally changed the risk landscape. These systems can execute multi‑step tasks, call APIs, write code, interact with external systems and make decisions at machine speed.

This introduces new governance challenges:

- Extended action chains that obscure causality

- Expanded attack surfaces through tool integrations

- Delegation between agents that blurs accountability

- Persistent memory that accumulates sensitive data

- Machine‑speed execution that outpaces human oversight

Traditional governance frameworks — designed for static, deterministic software — are not equipped to manage these behaviors. A new architecture is required.

Recommendation: A Three‑Pillar Approach to AI Governance

A robust governance model must integrate three core pillars that reinforce one another: security, governance and compliance. Together, they form the foundation for safe and scalable AI deployment.

1) Secure — Harden the AI Attack Surface

AI introduces attack vectors that conventional security controls cannot fully address. Effective security requires:

- Zero‑trust principles for AI agents and endpoints

- Continuous adversarial testing and red‑teaming

- Provenance tracking and cryptographic versioning

- Drift detection and automated rollback

- Guardrails for agentic systems, including human‑veto gates

Security must be continuous, adaptive and deeply embedded into the AI lifecycle.
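The human‑veto gate listed above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `Action` type, the numeric risk score, the `threshold` default and the `approver` callback are all hypothetical names, and a real deployment would route approvals through a review queue rather than an in-process function.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """A single step an agent proposes to take (hypothetical structure)."""
    name: str
    risk: float  # assumed scale: 0.0 (harmless) to 1.0 (critical), scored upstream


def run_with_veto(
    action: Action,
    execute: Callable[[Action], str],
    approver: Callable[[Action], bool],
    threshold: float = 0.5,
) -> str:
    """Execute low-risk actions directly; hold the rest for a human decision."""
    if action.risk >= threshold and not approver(action):
        return f"blocked: {action.name}"
    return execute(action)


def demo_execute(action: Action) -> str:
    # Stand-in for the real tool call or API request.
    return f"executed: {action.name}"


def deny_all(action: Action) -> bool:
    # Stand-in for a human reviewer; this one vetoes every request it sees.
    return False


low = run_with_veto(Action("read_report", 0.1), demo_execute, deny_all)
high = run_with_veto(Action("wire_transfer", 0.9), demo_execute, deny_all)
print(low)   # executed: read_report
print(high)  # blocked: wire_transfer
```

The low‑risk action never reaches the reviewer, which keeps human attention on the small set of actions where a veto matters.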

2) Govern — Establish Accountability and Oversight

Governance ensures that AI systems are deployed responsibly, transparently and in alignment with organizational values.

Key components include:

- A cross‑functional AI governance board

- A continuously updated AI use‑case registry

- A risk‑tiering framework and risk appetite statement

- Mandatory bias, fairness and performance testing

- Vendor AI risk assessments and contractual controls

Governance transforms AI from experimentation into a managed enterprise capability.
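To make the use‑case registry and risk‑tiering framework concrete, the sketch below shows one possible shape for a registry entry and a rule that assigns a tier. The field names, the three tiers and the tiering rule itself are assumptions made for illustration; an actual framework would derive them from the organization's own risk appetite statement.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class UseCase:
    name: str
    owner: str                  # accountable business unit
    affects_individuals: bool   # e.g. credit, hiring or clinical decisions
    autonomous_actions: bool    # system can act without human review
    third_party_model: bool     # model is supplied by a vendor


def assign_tier(uc: UseCase) -> Tier:
    """Illustrative rule: autonomy over decisions about people is HIGH;
    any single risk factor is MEDIUM; otherwise LOW."""
    if uc.affects_individuals and uc.autonomous_actions:
        return Tier.HIGH
    factors = sum([uc.affects_individuals, uc.autonomous_actions, uc.third_party_model])
    return Tier.MEDIUM if factors >= 1 else Tier.LOW


registry = [
    UseCase("loan_pre_screening", "credit-risk", True, True, False),
    UseCase("meeting_summaries", "it-ops", False, False, True),
    UseCase("internal_search", "knowledge-mgmt", False, False, False),
]

tiers = {uc.name: assign_tier(uc) for uc in registry}
for name, tier in tiers.items():
    print(f"{name}: {tier.name}")
```

Keeping the rule as plain code makes the tiering decision reviewable by the governance board and repeatable whenever a registry entry changes.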

3) Comply — Navigate the Global Regulatory Landscape

AI regulation is accelerating across jurisdictions, and organizations must be prepared to meet diverse and evolving obligations.

Effective compliance requires:

- A live regulatory matrix mapping obligations to each use case

- Automated impact assessments for high‑risk systems

- Transparency disclosures and model documentation

- Continuous monitoring rather than point‑in‑time audits

- Processes for regulatory change detection and policy updates

Compliance is now a dynamic operational discipline.
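A regulatory matrix of the kind described above can be represented as data and queried per use case. The following sketch assumes a simple tag‑based mapping; the regime labels (`REGIME_A`, `REGIME_B`), requirement names and tags are placeholders and do not describe what any actual regulation requires.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Obligation:
    regime: str                 # placeholder label, not a real legal citation
    requirement: str            # short identifier for the control or document
    applies_to: frozenset[str]  # use-case tags that trigger the obligation


@dataclass
class UseCase:
    name: str
    tags: set[str]
    evidence: set[str] = field(default_factory=set)  # requirements already evidenced


def open_obligations(use_case: UseCase, matrix: list[Obligation]) -> list[Obligation]:
    """Return obligations triggered by the use case's tags that lack evidence."""
    return [
        o for o in matrix
        if o.applies_to & use_case.tags and o.requirement not in use_case.evidence
    ]


MATRIX = [
    Obligation("REGIME_A", "impact_assessment", frozenset({"high_risk"})),
    Obligation("REGIME_A", "transparency_notice", frozenset({"customer_facing"})),
    Obligation("REGIME_B", "model_documentation", frozenset({"high_risk", "credit"})),
]

chatbot = UseCase("support_chatbot", {"customer_facing"})
scoring = UseCase("credit_scoring", {"high_risk", "credit"}, {"impact_assessment"})

for uc in (chatbot, scoring):
    gaps = [f"{o.regime}:{o.requirement}" for o in open_obligations(uc, MATRIX)]
    print(uc.name, "->", gaps)
```

Because the matrix is data rather than a static document, a regulatory change becomes an update to `MATRIX`, and rerunning the query shows which use cases are newly out of compliance.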

Resilience: The New Core of Enterprise Security

Security, governance and compliance are essential, but they are not sufficient on their own. AI systems must also be resilient — able to withstand attacks, failures, data corruption and vendor outages without compromising business continuity.

Resilience spans three domains:

- Cyber resilience: defending against model poisoning, inference attacks and agentic compromise

- Operational resilience: ensuring critical services continue even when AI systems degrade

- Data resilience: maintaining data integrity, lineage and availability under adverse conditions

A resilient AI ecosystem can anticipate, absorb, recover from and adapt to disruption.
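Operational resilience, in the sense of keeping a service running while the AI component is degraded, is often implemented with a fallback path. The sketch below is a simplified circuit‑breaker pattern under stated assumptions: the class name `FallbackGuard`, the failure limit and the two demo functions are hypothetical, and the breaker has no timed recovery, which a production version would need.

```python
from typing import Callable


class FallbackGuard:
    """Route requests to a deterministic fallback while the AI path is unhealthy."""

    def __init__(
        self,
        primary: Callable[[str], str],
        fallback: Callable[[str], str],
        max_failures: int = 3,
    ) -> None:
        self.primary = primary
        self.fallback = fallback
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures of the primary path

    def call(self, request: str) -> str:
        if self.failures >= self.max_failures:
            return self.fallback(request)  # circuit open: skip the AI path
        try:
            result = self.primary(request)
        except Exception:
            self.failures += 1
            return self.fallback(request)
        self.failures = 0  # a success resets the consecutive-failure count
        return result


calls = {"primary": 0}


def failing_model(request: str) -> str:
    # Simulates a vendor outage: every call to the model endpoint fails.
    calls["primary"] += 1
    raise TimeoutError("model endpoint unavailable")


def rules_based(request: str) -> str:
    # Deterministic degraded mode, e.g. a manual review queue.
    return f"queued for manual review: {request}"


guard = FallbackGuard(failing_model, rules_based, max_failures=2)
results = [guard.call(f"claim-{i}") for i in range(4)]
print(results[-1])       # queued for manual review: claim-3
print(calls["primary"])  # 2
```

All four requests are served, but the failing model is tried only twice before the guard stops calling it, so the outage does not add latency to every subsequent request.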

Sector Context Shapes Governance Priorities

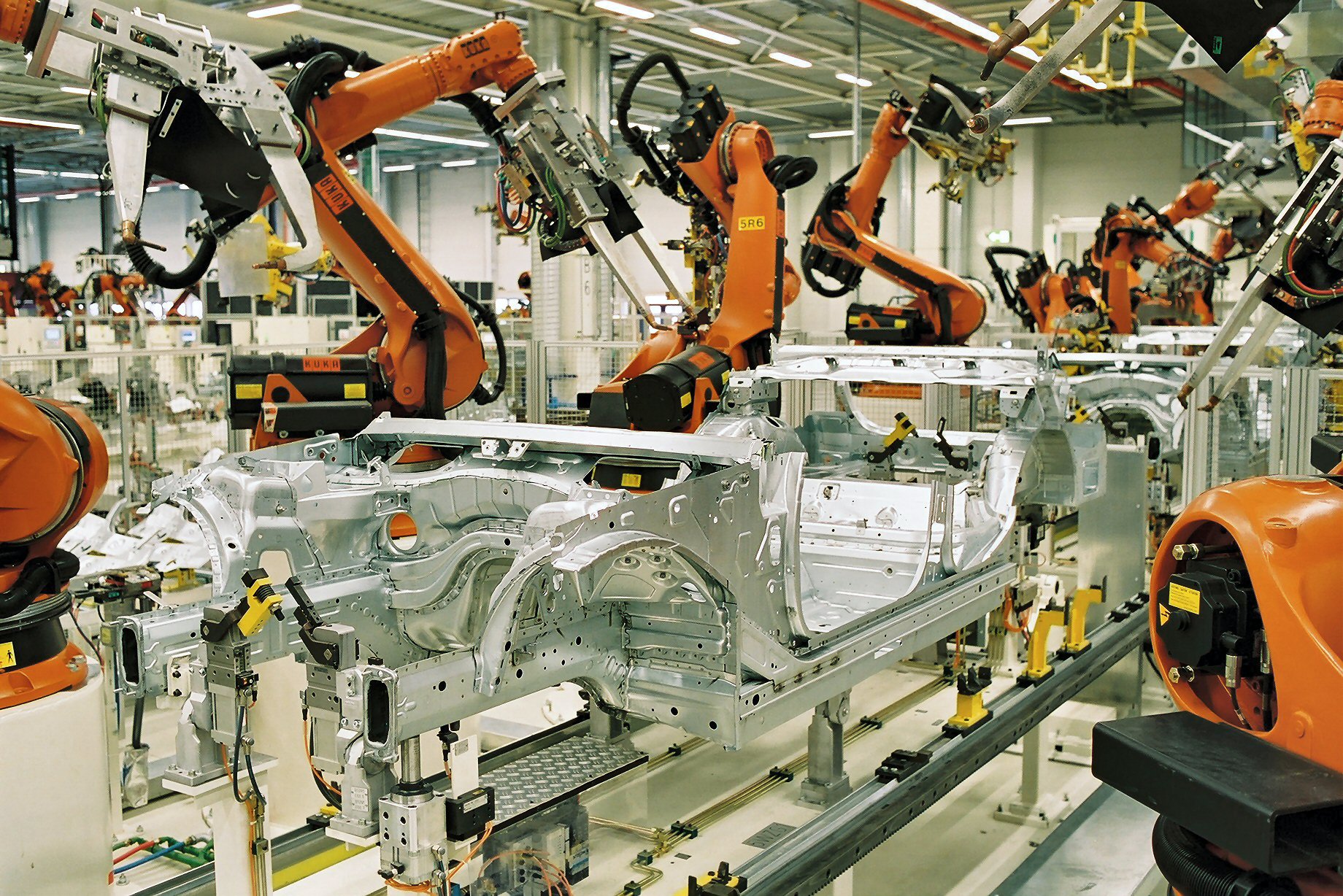

AI governance is not uniform across industries. Each sector faces distinct risks, regulatory expectations and operational constraints.

- Financial services: explainability, fairness, model risk management

- Healthcare: clinical validation, safety assurance, patient consent

- Manufacturing & OT / ICS environments: safety‑critical controls, deterministic fail‑safes, network segmentation

- Public sector: transparency, rights protection, procedural fairness

- Energy and critical infrastructure: operational resilience and safety engineering

- Retail and consumer tech: consent, bias mitigation, responsible generative AI

Effective governance must be calibrated to the realities of each domain.

A Practical Roadmap to Maturity

Achieving credible AI governance requires a phased, structured approach:

- Foundation: Assess maturity, map use cases, identify risks and regulatory obligations

- Structure: Establish governance bodies, policies, standards and security architecture

- Control: Implement testing, monitoring, vendor assessments and operational safeguards

- Optimize: Institutionalize dashboards, audits, certifications and continuous improvement

The goal is to reach a defined, measurable and auditable level of maturity — one that enables safe scale rather than constraining innovation.

Conclusion

AI is reshaping industries, accelerating productivity and unlocking new forms of value. But without strong governance, it also introduces risks that can undermine trust, disrupt operations and expose organizations to regulatory and ethical consequences.

The organizations that will lead in the AI‑driven economy are those that treat governance not as a compliance burden, but as a strategic capability. By building secure, accountable, compliant and resilient AI systems, they position themselves to innovate with confidence — and to earn the trust of customers, regulators and society.

The governance gap is real, but it is bridgeable. The time to act is now.

Bibliography

- IBM Security — Cost of a Data Breach Report

- McKinsey — The State of AI

- World Economic Forum — Global Risks Report

- PwC — AI Jobs Barometer

- NIST AI Risk Management Framework

- ISO/IEC 42001 — AI Management System Standard

- EU Artificial Intelligence Act

- Monetary Authority of Singapore — FEAT Principles

- AI Verify (Singapore)

- IEC 62443 — Industrial Automation and Control Systems Security

- NIST SP 800‑82 — Guide to Industrial Control Systems Security

- SR 11‑7 — Federal Reserve Model Risk Management Guidance

- FDA — AI/ML Software as a Medical Device Action Plan